Anthropic’s Latest Claude Model Is Prompting Questions About What “Same Price” Really Means

When Anthropic introduced Claude Opus 4.7 this month, the company presented the model as a notable advance in coding, long-running autonomous tasks and visual reasoning — a stronger version of its flagship system without a change to the headline price.

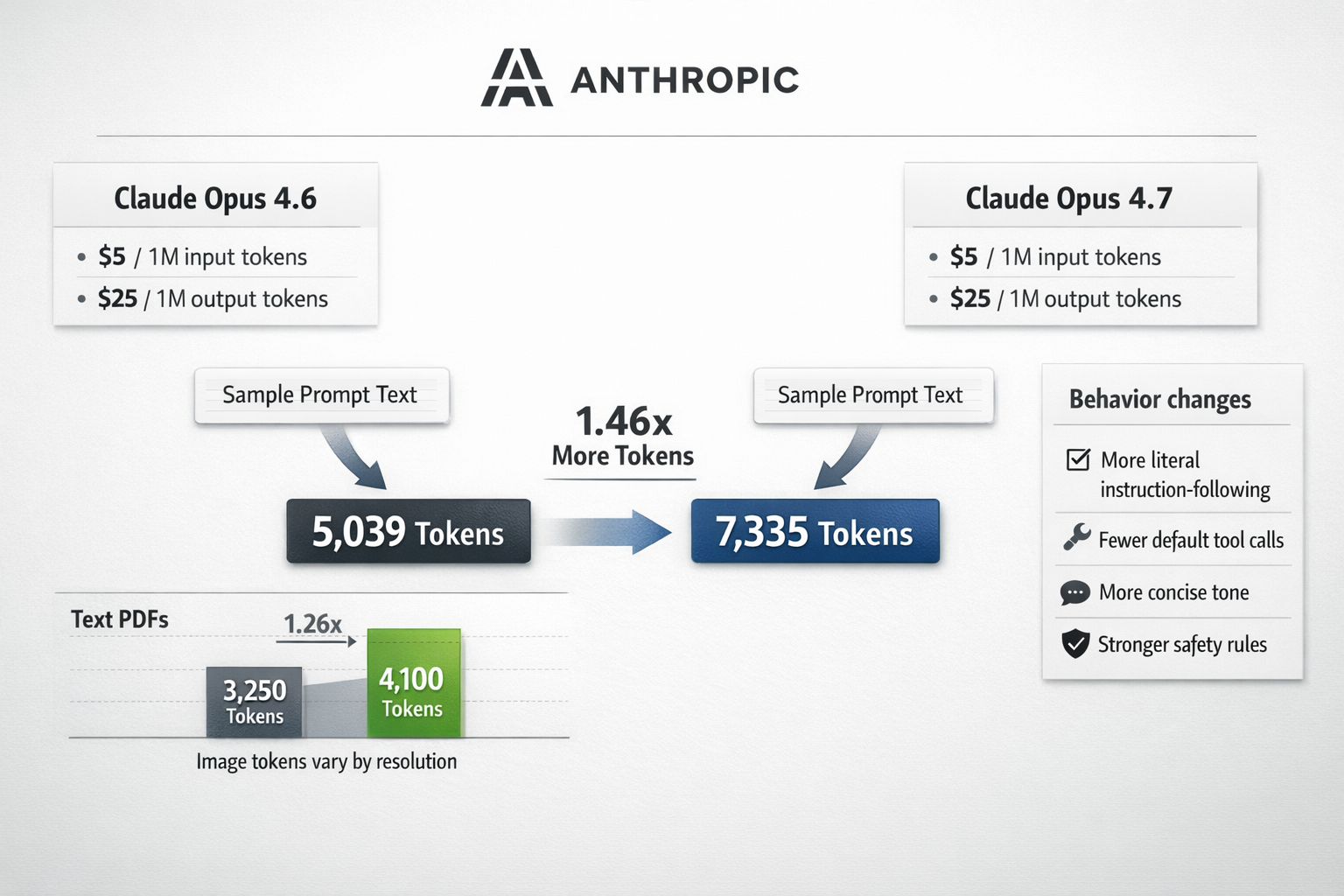

On paper, that remains true. Opus 4.7 costs $5 per million input tokens and $25 per million output tokens, the same list price as Opus 4.6.

But in the days since the release on April 16, a more complicated picture has begun to emerge. Developers examining the model’s underlying mechanics say that while the sticker price is unchanged, actual usage costs may rise meaningfully because the model now breaks the same input into more billable tokens. At the same time, close readings of Anthropic’s newly published system prompt suggest the company has also made important changes to how Claude is instructed to behave — including when to act, when to call tools and how firmly to respond in sensitive areas.

Together, those shifts are fueling a broader debate over a question that has become increasingly central in the artificial intelligence industry: when a model is upgraded, what exactly has changed — intelligence, behavior, price or all three at once?

A Cost Increase Hidden in Tokenization

Anthropic disclosed in its announcement that Opus 4.7 uses an updated tokenizer, the software layer that converts text and images into the chunks, or tokens, on which a model is billed. The company said the same input could map to roughly 1.0 to 1.35 times as many tokens, depending on content type.

That warning drew immediate attention from developers who rely on Claude for coding and agentic workflows, where prompts can be large, repetitive and expensive even before output is generated.

Independent testing has suggested the increase can exceed Anthropic’s broad guidance in some cases. Simon Willison, an independent developer and writer who tracks model behavior closely, updated a public token-counting tool to compare how the same input is tokenized across Claude versions. In one test using the published Opus 4.7 system prompt as input, he found that Opus 4.7 counted 7,335 tokens compared with 5,039 for Opus 4.6 — about 1.46 times as many.

At unchanged per-token rates, that would mean paying about 46 percent more in input charges for the same text.
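As a worked example of that arithmetic, the sketch below prices the two reported token counts at the $5 per million input-token list rate. It assumes the whole prompt is billed as ordinary input, with no caching or batch discounts, and the function name is purely illustrative.

```python
# List price for input tokens on both Opus 4.6 and Opus 4.7, in USD per million tokens.
INPUT_PRICE_PER_MILLION = 5.00


def input_cost(tokens: int, price_per_million: float = INPUT_PRICE_PER_MILLION) -> float:
    """Return the input-side cost in USD for a given token count."""
    return tokens * price_per_million / 1_000_000


# Token counts reported for the same system prompt text.
opus_46_tokens = 5_039
opus_47_tokens = 7_335

cost_46 = input_cost(opus_46_tokens)
cost_47 = input_cost(opus_47_tokens)
ratio = opus_47_tokens / opus_46_tokens

print(f"Opus 4.6: ${cost_46:.6f} per request")
print(f"Opus 4.7: ${cost_47:.6f} per request")
print(f"Ratio: {ratio:.2f}x")
```

The per-request difference is a fraction of a cent, which is why the effect only becomes visible once the same prompt is sent thousands or millions of times.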

The effect appears to vary widely by medium. Willison found only a modest increase, roughly 1.08 times, on a 30-page text-heavy PDF. And an apparent tripling in image token counts turned out to reflect Opus 4.7’s ability to process much higher-resolution images than prior versions, rather than a simple across-the-board increase. When he resized an image to a smaller resolution, token usage was nearly identical between the two models.
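A comparison of this kind can be sketched as a small harness that runs the same samples through two token counters and reports the ratio for each content type. The counter below is a deliberately crude stand-in so the example runs offline; it is not either model's tokenizer, and the sample texts and model names are placeholders. In practice, `count_tokens` would wrap a real token-counting endpoint.

```python
import re
from typing import Callable, Dict


def tokenizer_ratios(
    samples: Dict[str, str],
    count_tokens: Callable[[str, str], int],
    old_model: str,
    new_model: str,
) -> Dict[str, float]:
    """Return new/old token-count ratios for each labeled sample."""
    ratios = {}
    for label, text in samples.items():
        old = count_tokens(old_model, text)
        new = count_tokens(new_model, text)
        ratios[label] = new / old
    return ratios


def toy_counter(model: str, text: str) -> int:
    """Stand-in counter: NOT a real tokenizer, just two different splitting rules."""
    if model == "old-model":
        # Count whitespace-separated words.
        return len(text.split())
    # Count words plus each punctuation mark separately.
    return len(re.findall(r"\w+|[^\w\s]", text))


# Placeholder samples standing in for different content types.
samples = {
    "prose": "The quick brown fox jumps over the lazy dog.",
    "code": "def add(a, b): return a + b",
}

for label, ratio in tokenizer_ratios(samples, toy_counter, "old-model", "new-model").items():
    print(f"{label}: {ratio:.2f}x")
```

Even with toy counting rules, the punctuation-heavy code sample inflates more than the prose sample, which illustrates why a single headline multiplier can hide wide variation between workloads.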

Still, for customers with large prompt templates, persistent context windows or coding agents that repeatedly resend instructions, even a 10 to 40 percent increase in token counts can materially affect spending.
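To make that range concrete, here is a rough projection for an agent that resends a fixed prompt template on every call. The template size and call volume are made-up illustrative figures, and the multipliers simply span the 10 to 40 percent range mentioned above plus Anthropic's stated 1.35 upper bound; none of them come from a real workload.

```python
INPUT_PRICE_PER_MILLION = 5.00  # USD, list price cited in the article


def monthly_input_cost(template_tokens: int, calls_per_month: int, multiplier: float) -> float:
    """Estimate monthly input spend when each call resends the same template.

    `multiplier` scales the old token count to model a tokenizer that emits
    more tokens for the same text.
    """
    billed_tokens = template_tokens * multiplier * calls_per_month
    return billed_tokens * INPUT_PRICE_PER_MILLION / 1_000_000


# Hypothetical workload: a 20,000-token template sent 100,000 times a month.
TEMPLATE_TOKENS = 20_000
CALLS_PER_MONTH = 100_000

baseline = monthly_input_cost(TEMPLATE_TOKENS, CALLS_PER_MONTH, 1.0)
for multiplier in (1.0, 1.1, 1.35, 1.4):
    cost = monthly_input_cost(TEMPLATE_TOKENS, CALLS_PER_MONTH, multiplier)
    print(f"{multiplier:.2f}x -> ${cost:,.0f} per month (+${cost - baseline:,.0f})")
```

Under these assumed figures the baseline is $10,000 a month in input charges, so a 1.4 multiplier adds about $4,000 a month without any change to the list price.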

That matters because enterprises often budget around list prices, not around how a tokenizer may reinterpret their traffic. In practice, “same price” can mean something quite different from “same cost.”

Why the Changes Matter Beyond the Invoice

The concern is not merely that Opus 4.7 might cost more. It is that developers may have to rethink whether the new model is a drop-in replacement at all.

Anthropic has described the release as more literal in following instructions, somewhat less inclined to make tool calls by default and more direct in tone. The company has also said that “thinking” content is omitted by default unless users explicitly request it, a change that may alter how products surface reasoning or explain actions to end users.

For teams that built workflows around Opus 4.6’s tendencies — how often it asked clarifying questions, how aggressively it used tools, how verbose it was in caveats — those behavioral adjustments can be as consequential as benchmark gains.

In software engineering agents, small changes in prompt interpretation can affect whether a model proceeds autonomously or pauses for user input. In customer-facing products, a more concise or more literal assistant can improve clarity in some cases while feeling abrupt in others. And in budget-sensitive deployments, if the model both tokenizes more aggressively and produces longer or higher-effort outputs, costs can rise from both ends of the interaction.

The result is that upgrading to a newer model increasingly resembles a product migration, not a simple version bump.

A Rare Window Into How a Model Is Steered

Anthropic occupies an unusual position in the industry because it publishes the system prompts used for Claude’s chat product, providing outsiders with a partial but revealing look at how the company directs model behavior. Most major AI labs do not make that layer public.

The newly posted Opus 4.7 prompt, dated April 16, shows a series of revisions that independent researchers and developers quickly began diffing against the Opus 4.6 version. Those changes suggest Anthropic is sharpening not only what Claude can do, but how it should decide to do it.
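Diffing two prompt versions needs nothing more exotic than a line-based text diff. The sketch below uses Python's standard `difflib` module; the two prompt snippets are invented placeholders that loosely paraphrase changes described in this article, not the actual prompt text, and the file labels are hypothetical.

```python
import difflib

# Invented stand-ins for two prompt versions; NOT the real prompt text.
old_prompt = """Claude asks clarifying questions when a request is ambiguous.
Claude avoids emotive stage directions.
"""
new_prompt = """Claude makes a reasonable attempt when minor details are unspecified.
Claude keeps responses focused and concise.
"""


def diff_prompts(
    old: str,
    new: str,
    old_label: str = "opus-4.6.txt",
    new_label: str = "opus-4.7.txt",
) -> str:
    """Return a unified diff of two prompt texts, compared line by line."""
    lines = difflib.unified_diff(
        old.splitlines(keepends=True),
        new.splitlines(keepends=True),
        fromfile=old_label,
        tofile=new_label,
    )
    return "".join(lines)


print(diff_prompts(old_prompt, new_prompt))
```

Lines prefixed with `-` appear only in the older text and lines prefixed with `+` only in the newer one, which is how removals and additions of the kind described below would surface.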

Among the most notable additions is stronger child-safety language, now wrapped in a dedicated critical instruction section. The prompt also adds explicit guidance that once Claude refuses a request on child-safety grounds, it should handle later turns in the same conversation with “extreme caution.”

Another prominent change pushes Claude toward action over interrogation. The prompt now says that when a request leaves minor details unspecified, the user usually wants a reasonable attempt immediately, not a round of preliminary questions. If a tool can resolve ambiguity — by searching, checking a calendar or discovering available capabilities — Claude is instructed to use the tool rather than ask the user to do the work.

That instruction is paired with a notable new requirement: before claiming it lacks access to information or capabilities, Claude is told to use a tool called “tool_search” to check whether a relevant tool is available but not yet surfaced.
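The check-before-declining pattern described here can be sketched as a two-stage lookup: consult the tools already surfaced, then search a wider registry, and only then report that the capability is missing. Everything in this sketch is hypothetical, including the registry contents, the keyword matching and the function names; Anthropic has not published how `tool_search` works internally, and the function of that name below is only a stand-in.

```python
from typing import Dict, List, Optional, Set

# Hypothetical registry: tool name -> keywords describing what it does.
REGISTRY: Dict[str, Set[str]] = {
    "web_search": {"search", "web"},
    "calendar_lookup": {"calendar", "schedule", "meeting"},
    "weather": {"weather", "forecast"},
}

# Hypothetical list of tools already visible in the conversation.
SURFACED_TOOLS: List[str] = ["web_search"]


def match_tool(query: str, candidates: List[str]) -> Optional[str]:
    """Return the first candidate whose keywords overlap the query words."""
    words = set(query.lower().split())
    for name in candidates:
        if words & REGISTRY[name]:
            return name
    return None


def tool_search(query: str) -> Optional[str]:
    """Stand-in for a registry search over tools that are not yet surfaced."""
    hidden = [name for name in REGISTRY if name not in SURFACED_TOOLS]
    return match_tool(query, hidden)


def resolve_capability(query: str) -> str:
    """Decide how to proceed on a request that needs some capability."""
    surfaced = match_tool(query, SURFACED_TOOLS)
    if surfaced is not None:
        return f"use {surfaced}"
    discovered = tool_search(query)
    if discovered is not None:
        return f"load and use {discovered}"
    # Only after both lookups fail is it fair to say the capability is missing.
    return "no matching tool found"


print(resolve_capability("search the web for news"))
print(resolve_capability("check my calendar for tomorrow"))
print(resolve_capability("translate this audio file"))
```

The design point is the ordering: the "no matching tool" branch is reachable only after the registry search has run, which mirrors the instruction that a claim of missing access should come last rather than first.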

The prompt also encourages brevity, directing Claude to keep responses focused and concise and not to overwhelm users with long disclaimers. Other new language addresses disordered eating, telling the model not to provide precise diet, nutrition or exercise targets even if the request is framed as health advice. And in a sign of the increasingly politicized stress tests faced by major chatbots, the prompt now says Claude may decline to give a simple yes-or-no answer on complex or contested issues and instead explain why nuance is warranted.

Some instructions present in Opus 4.6 were also removed. A prior note telling Claude to avoid certain words and emotive stage directions disappeared, suggesting either a shift in style priorities or confidence that the newer model no longer needs that corrective guidance. A special instruction clarifying that Donald Trump is the current president of the United States — added earlier to compensate for knowledge-cutoff-related errors — is also gone, apparently because the newer model’s knowledge is now current enough not to require the patch.

The Limits of Transparency

Even Anthropic’s openness has limits.

While the company publishes the main system prompt, it does not disclose the full set of tool descriptions and metadata supplied internally to Claude. That matters because tool definitions can strongly influence what an assistant believes it can do and when it chooses to do it.

Developers who have probed Claude directly report that the chat product appears to have access to a wide menu of capabilities, including web search, web fetching, file creation, conversation search, weather, maps and a registry search for Model Context Protocol (MCP) tools. But because those tool descriptions are not formally published alongside the system prompt, outside observers cannot fully disentangle whether a behavioral change comes from the underlying model, the prompt or the hidden interface layer wrapped around it.

That ambiguity is becoming more important as AI companies present models as increasingly autonomous agents rather than pure text generators. If a model appears more capable, the gain may reflect not just stronger reasoning but more aggressive orchestration. If it appears more cautious, the explanation may lie in policy text rather than in the weights.

A Broader Industry Tension

The scrutiny surrounding Opus 4.7 reflects a wider tension in the AI market. Model makers are racing to show improvements on coding, agentic work and multimodal tasks, while customers are learning that practical adoption depends on less glamorous details: token accounting, prompt compatibility, tool behavior and safety policy.

For buyers, benchmark wins are only part of the equation. The more immediate questions are often operational. Will this model fit within our budget? Will it change user experience in subtle ways? Will our prompts need to be rewritten? Will our guardrails still work?

Anthropic’s release underscores how those questions can no longer be treated as secondary.

Opus 4.7 may well deliver the reliability, coding autonomy and vision improvements Anthropic advertises. But the early reaction suggests that many users are now looking past model scorecards to something more concrete: the real cost of running the system, and the growing amount of invisible product policy embedded inside it.

For an industry that often sells progress in terms of larger capabilities, that may be the more consequential shift.

