Google Tries to Sell the Whole AI Stack

At its Cloud Next conference on Tuesday, Google delivered a broad new pitch to corporate customers: stop thinking of artificial intelligence as a chatbot bolted onto office software, and start treating it as an operating layer for the company itself.

The company called that vision the “agentic enterprise,” and it used the event to introduce an interlocking set of products meant to make the case. There were new eighth-generation Tensor Processing Units, or TPUs, split into one system designed for training frontier models and another tuned for fast, low-latency inference. There was a reworked enterprise agent platform built around Gemini. There was a new contextual AI layer for Workspace. And there were updated research agents, including Deep Research Max, intended to automate complex information gathering across the web and private corporate data.

The message was less about any single product than about vertical integration. Google is arguing that the next phase of enterprise AI will belong not to stand-alone models, but to companies that can provide the chips, networking, cloud infrastructure, orchestration tools, productivity software and domain-specific applications in one package.

That is a significant shift in emphasis. For much of the generative AI boom, technology companies sold businesses on copilots and chat interfaces. Google’s presentation suggested it now wants to move beyond assisting workers with prompts and summaries toward systems that can reason through tasks, plan multistep actions and carry out work across business software and data environments.

A Hardware Push for AI at Scale

Central to that argument was new infrastructure.

Google introduced its eighth-generation TPUs in two versions: TPU 8t, aimed at training large AI models, and TPU 8i, built for serving models with lower latency. The company also tied the chips to broader data-center infrastructure, including its Virgo networking technology, underscoring that it sees compute, networking and software as one enterprise offering rather than separate layers.

That focus reflects a reality of the current AI market. As businesses move from experimenting with chatbots to deploying AI systems across departments, demand is shifting from occasional model access to continuous, large-scale computing capacity. The economics of that transition could determine which cloud provider wins the next phase of enterprise spending.

Google said customer API usage has climbed to more than 16 billion tokens per minute, a figure meant to show both the scale of demand and the company’s need to keep expanding infrastructure. It also said that just over half of its machine-learning compute investment this year is expected to go to cloud customers and partners, a notable signal that Google is trying to prove it is not building AI capacity solely for its own products.

The company is hardly alone. Microsoft, Amazon and OpenAI, along with chipmakers like Nvidia, are all vying to define the infrastructure layer of enterprise AI. But Google’s latest move was notable for how explicitly it tied custom silicon to the application layer above it.

From Copilots to Agents

The heart of Google’s strategy is the idea that businesses want AI agents, not just assistants.

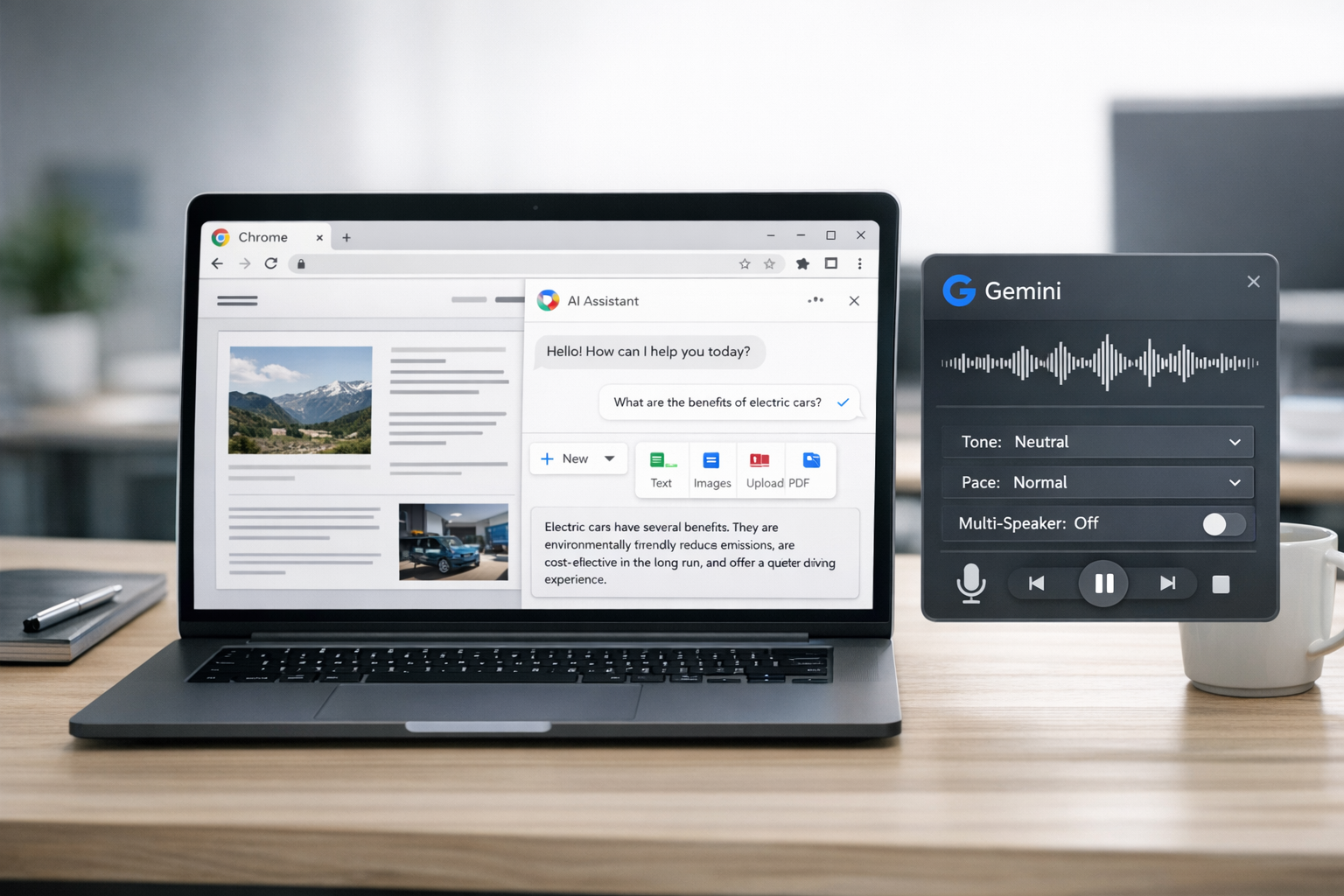

Its Gemini Enterprise Agent Platform is intended to let companies build and manage systems that can take action across workflows, using business context and tools rather than simply answering questions. In Workspace, Google introduced what it described as an intelligence layer meant to draw on organizational context and make its office software more responsive to the needs of teams and individual workers.

The updated Deep Research products push that concept further. Deep Research and Deep Research Max are designed to automate more complex investigations, drawing from web sources and, increasingly, proprietary enterprise data. Developers can connect specialized sources, including financial feeds, through the Model Context Protocol, or MCP, which has emerged as an important way to link models to external tools and information.

The ambition is clear: an analyst, lawyer, consultant or operations manager would no longer use AI merely to draft a memo or summarize a meeting, but to conduct broad research, synthesize findings from internal and external sources, and return with a structured output ready for review.

That vision remains aspirational in some important respects. Google promoted performance gains for its research agents, but outside observers still have limited visibility into how those benchmarks were produced and how reliably such systems perform in real, high-stakes settings. Autonomous research tools can be compelling in demonstrations while remaining error-prone in practice, especially when they are asked to evaluate ambiguous, rapidly changing or specialized information.

AI for Maps, Media and Infrastructure

Google also used the event to showcase a more industry-specific side of its AI strategy, especially in imaging and geospatial analysis.

Among the new tools were systems that let creative professionals place AI-generated scenes into real Street View environments, potentially streamlining location scouting and previsualization for film and media work. Google also presented products aimed at city planning, infrastructure analysis and remote sensing, saying some satellite image analysis tasks that once took weeks could be completed in minutes, and it highlighted models that can identify objects such as bridges and power lines.

Those announcements may have seemed far from Workspace and enterprise agents, but they fit neatly into Google’s larger case. Few companies possess both a large cloud business and an archive of mapping, Street View and satellite data extensive enough to build such tools. By turning those assets into enterprise AI products, Google is trying to show that its advantage lies not just in foundation models, but in the combination of models with unique data, cloud infrastructure and existing software ecosystems.

For customers in industries like logistics, urban planning, media and utilities, that matters. The enterprise AI market is beginning to split between general-purpose assistants and tools tailored to sector-specific workflows. Google appears to want both.

Why the Push Matters Now

The timing is important.

The first years of the generative AI boom were dominated by experimentation. Companies tested chatbots, bought coding assistants and ran pilots inside customer support or marketing teams. But many executives have become more demanding. They want systems that can be embedded into operations, tied to internal data and justified in terms of productivity or revenue, not just novelty.

Google’s Cloud Next announcements were a direct response to that shift. The company is presenting AI as a full enterprise platform, one that stretches from the data center to the employee desktop and into specialized industry applications.

That strategy could appeal to businesses looking to simplify procurement and reduce integration headaches. But it also raises familiar concerns about concentration and lock-in. The more of the AI stack a company buys from one provider — chips, models, orchestration, productivity tools, security and data connectors — the harder it may become to switch later.

There are also practical questions Google did not fully resolve. Some of the newly announced hardware and platform features will need to prove they can scale broadly. Customers will want evidence that Google’s systems offer a meaningful advantage in cost or performance over rival clouds and model providers. And companies considering autonomous research or workflow agents will need to weigh speed against reliability, governance and accountability.

Still, Google’s message at Cloud Next was unmistakable. The company is no longer merely trying to show that it can compete in AI models. It is trying to persuade businesses that the next era of enterprise computing will be built around agents — and that Google should supply the entire foundation.

