Google Pushes A.I. Deeper Into the Browser and Gives Gemini a More Human Voice

Google is widening its consumer A.I. footprint on two fronts at once, embedding its chatbot-style search more tightly into Chrome while extending Gemini with a new text-to-speech model designed to sound more expressive, controllable and conversational.

The moves, announced on consecutive days in mid-April, suggest a broader strategy taking shape inside Google: to make A.I. less of a destination users visit and more of a layer that sits continuously across browsing, searching and speaking.

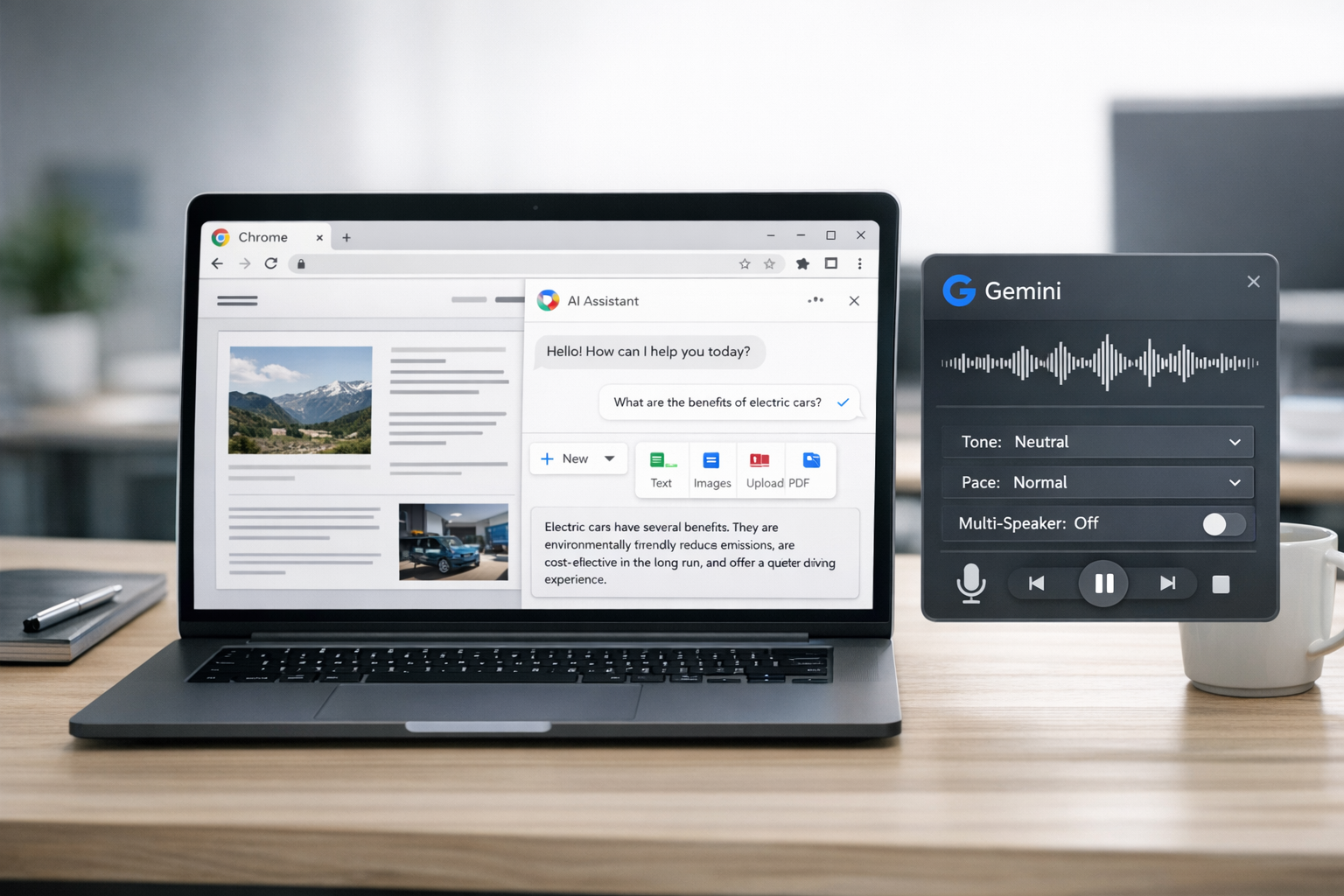

On April 16, Google said it was updating A.I. Mode in Chrome so desktop users in the United States can keep the feature open alongside webpages as they browse, ask follow-up questions without bouncing between tabs, and pull recent tabs, images and PDFs into the conversation through a new plus-menu. A day earlier, the company introduced Gemini 3.1 Flash TTS, a speech model that lets developers direct delivery through plain-language prompts, including tone, pacing, style and multi-speaker dialogue.

Individually, the products address different habits: how people navigate the web, and how digital assistants sound. Together, they point to Google’s effort to bind search, browser and assistant behavior into a more seamless consumer A.I. system.

A.I. Mode Moves From Search Feature to Browsing Companion

Google introduced A.I. Mode in Search in 2025 as an experimental alternative to the familiar list of blue links, offering conversational answers paired with web results. The latest Chrome changes move that concept closer to becoming an always-available browsing companion.

Instead of treating A.I. Mode as something users open, consult and leave behind, Google is now positioning it as a side panel that can remain visible while they read pages and continue asking questions. The feature is designed to reduce what Google describes as tab hopping: the familiar cycle of opening search results, returning to search, refining a query and opening still more pages.

That may sound like a simple interface adjustment, but it has larger implications. Chrome is the world’s dominant browser, and even modest A.I. changes inside it can shape how people gather information online. By keeping A.I. Mode persistently in view, Google is nudging users to conduct more of their research, comparison shopping and follow-up questioning inside a Google-mediated environment rather than across a patchwork of websites and search tabs.

The update also expands what can be fed into that environment. Users can now add recent tabs, images and PDF files to an A.I. Mode query, allowing the system to answer based on a broader bundle of context. In practice, that could mean asking Chrome to compare information across documents, summarize material from an open file, or answer questions grounded in what a user has already been viewing.

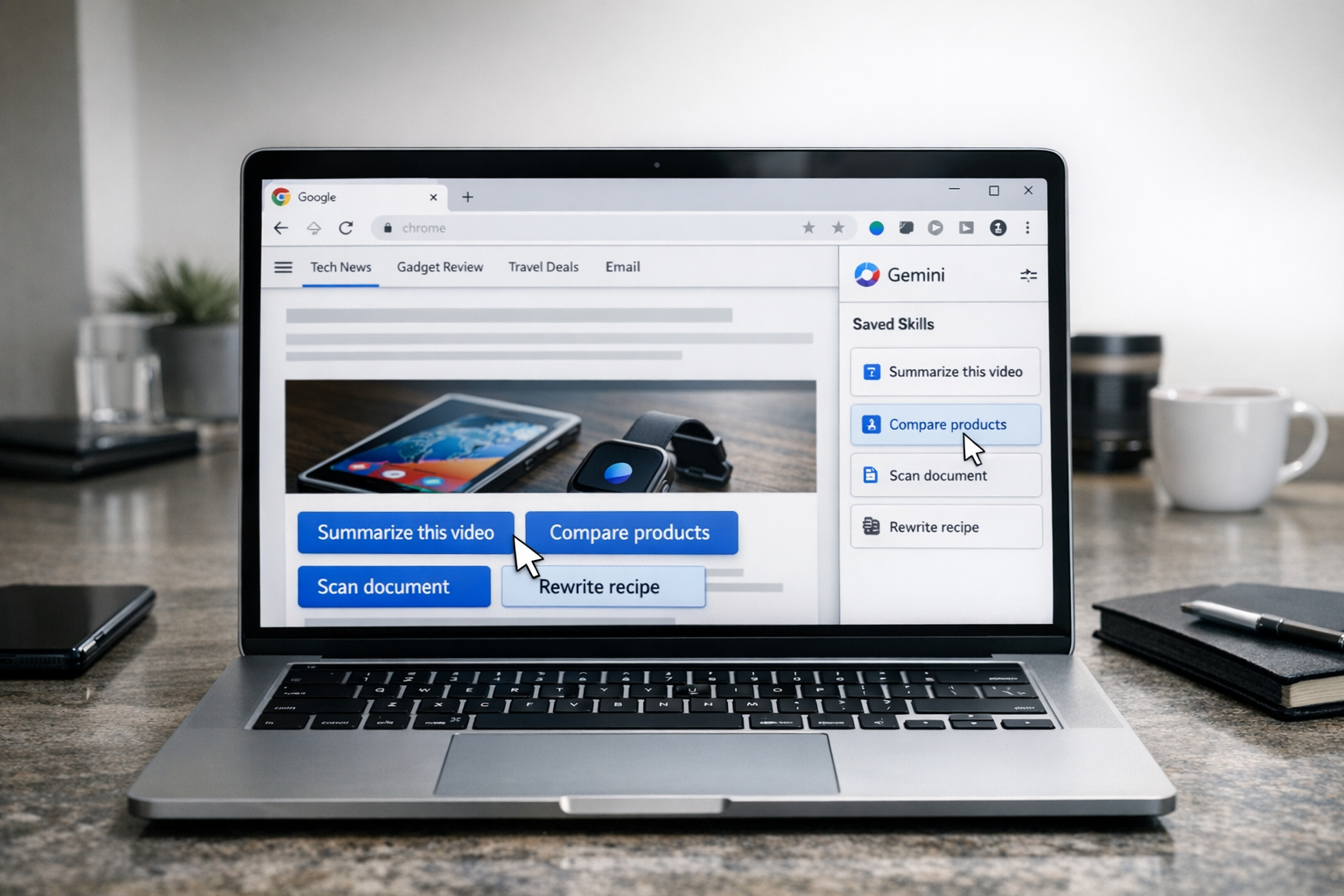

The release follows several related moves. In March, Google said it had expanded “Personal Intelligence” features into A.I. Mode in Search and Gemini in Chrome in the United States. More recently, it added an A.I. Mode shortcut to Chrome on iOS and Android and introduced “Skills in Chrome,” a feature that lets users save and rerun A.I. prompts on webpages. The pattern is one of steady integration: fewer boundaries between browser, search engine and assistant.

Gemini Speech Gets More Directable

If the Chrome update is about persistence, Gemini 3.1 Flash TTS is about control.

The new model, rolling out in preview through the Gemini API, Google AI Studio, Vertex AI and Google Vids, is meant to generate speech that can be steered with detailed natural-language instructions. Rather than selecting from a narrow set of preset voices, developers can specify how a voice should sound and behave — its mood, cadence, accent and emphasis — using prompt-based instructions.

Google says the model supports more than 70 languages and includes native multi-speaker dialogue generation, making it suitable not just for reading text aloud but for producing back-and-forth conversations, narrated content and voice-agent interactions. The company has also said generated audio includes SynthID watermarking, one of Google’s safeguards aimed at identifying A.I.-created media.

Outside developers quickly zeroed in on how unusually granular those controls appear to be. Early examples showed prompts that read almost like stage directions, describing studio atmosphere, vocal energy and regional accents in order to shape the final performance. That level of steerability reflects a broader shift in generative A.I.: models are increasingly being trained not just to produce output, but to follow creative and stylistic instructions with finer precision.
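
As a rough illustration of that stage-direction style, the Python sketch below assembles a plain-language delivery note and a speaker-labelled script into a single prompt string. It is an example under stated assumptions, not Google's API: the `Turn` class, the `build_tts_prompt` helper, the speaker labels and the sample wording are all hypothetical, and the step of actually sending the text to a speech model is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    """One line of dialogue (hypothetical structure, for illustration only)."""
    speaker: str  # label for the voice, e.g. "Host"
    text: str     # the words to be spoken


def build_tts_prompt(direction: str, turns: list[Turn]) -> str:
    """Join a delivery note and a speaker-labelled script into one prompt.

    Hypothetical helper: it only formats text and calls no speech service.
    """
    if not turns:
        raise ValueError("at least one turn is required")
    # The delivery note comes first, then a blank line, then the script.
    lines = [direction.strip(), ""]
    for turn in turns:
        lines.append(f"{turn.speaker}: {turn.text.strip()}")
    return "\n".join(lines)


prompt = build_tts_prompt(
    "Late-night radio studio. Warm, unhurried delivery; the guest sounds amused.",
    [
        Turn("Host", "So what changed in Chrome this week?"),
        Turn("Guest", "The A.I. panel stays open while you browse."),
    ],
)
print(prompt)
```

Keeping the direction separate from the script means the same dialogue can be re-rendered with a different mood or pace by changing only the first argument.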

For Google, the appeal is clear. A more expressive speech model can strengthen everything from virtual assistants and customer-service bots to video tools and accessibility features. It also helps Gemini compete in an increasingly crowded field where natural-sounding, emotionally varied voices are becoming a key benchmark.

Why the Timing Matters

The near-simultaneous launches underscore how Google is trying to make Gemini and A.I. Mode reinforce each other across surfaces.

In the browser, A.I. becomes more ambient and persistent. In speech, Gemini becomes more natural and programmable. The combination points toward a future in which a user may search, browse, upload a file, ask follow-up questions and then hear the answer delivered in a chosen voice or style — all within Google’s ecosystem.

That matters in part because the competitive terrain is shifting. Rivals have been racing to build A.I. assistants that are not just smarter but more present: inside operating systems, browsers, productivity suites and smartphones. For Google, whose core business still depends heavily on search and attention, the challenge is to keep users engaged as generative A.I. changes how people seek information.

There is also a business dimension for creators and publishers. A side-by-side A.I. browsing panel could send users to webpages while simultaneously reducing the need to click around as deeply as before. Google has argued that A.I. search tools can help people explore the web more efficiently. But publishers have been watching closely to see whether those tools broaden discovery or compress it, keeping more of the user’s journey inside Google’s own interfaces.

On the speech side, the opportunity comes with familiar concerns. More flexible voice generation can enable richer products, but it also raises sharper questions about misuse, including impersonation and deceptive synthetic media. Google’s watermarking is intended as a safeguard, though such protections have yet to settle debates over how enforceable or visible they are in real-world distribution.

An Ecosystem Strategy, Not Just a Product Update

Viewed together, the announcements are less about two isolated features than about an ecosystem strategy.

Google is weaving A.I. into the moments that already define its consumer reach: the search query, the browser tab, the uploaded document, the spoken response. The company appears to be betting that the next phase of A.I. adoption will not come only from standalone chatbots, but from making assistance feel native to everyday tools people are already using.

Whether users embrace A.I. Mode as a constant companion inside Chrome remains uncertain, and it is still unclear how quickly these features will expand beyond the United States. It is also too early to know whether Gemini 3.1 Flash TTS will stand out in daily use as much as it does in early demonstrations.

But the direction is unmistakable. Google is not merely adding more A.I. features. It is trying to make the browser itself a living interface for Gemini — one that can read with you, search with you and, increasingly, speak for you.

